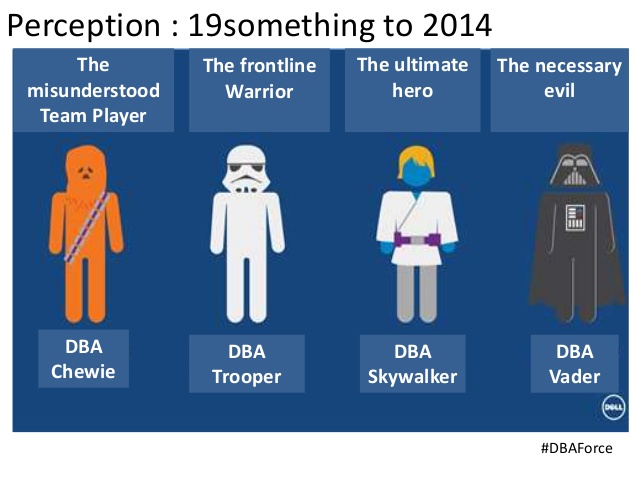

“Database administration is a dying breed; don’t go there”: that was the advice I received twenty-one years ago. It is a popular idea that springs up from time to time. The latest argument comes from the cloud: database services are well automated, so who needs a good DBA?

I have seen two main arguments for the diminishing role of the DBA, repeated in blog posts and first-hand conversations.

First: new technology makes the DBA obsolete. The latest databases are self-tuning, administration is automated, and relational databases will be taken over by NoSQL, Hadoop, MongoDB, XML, object-oriented databases or the cloud.

This argument fades away as soon as the new technology falls from the peak of its hype cycle. NoSQL didn’t replace the RDBMS, administration tasks become more difficult in an ever-growing landscape, and the cloud merely shifts the work to optimisation, capacity management and tasks higher in the value chain.

The second argument stems from an underestimation of the DBA’s work: “We don’t need DBAs because our developers know enough about databases.” I have heard similar statements during job interviews. When I asked at one company why a DBA role was being considered, I was told that “the developers don’t have time anymore for DBA work.” This was a software house where no one needed a DBA until they realised they NEEDED a DBA. They needed a firefighter to fix the data model, performance problems or data corruption. They needed a firefighter who would silently do his work without causing any delay in the software build process, and who would bring about fundamental changes in the structure without using up other resources. There was no ambition to raise the maturity level of the build process and no vision for operational intelligence or business intelligence: innovation was confined to application features. For efficiency and control over the build process they used Scrum, and that would have to suffice.

There was one funny moment in that job interview that I would like to share with you. After hearing about their situation, I asked them whether they had a lot of incidents and whether they thought that was simply part of the deal of writing software. I forgot the exact answer they gave, but I didn’t forget that the IT manager interviewing me was called away... for an incident.

I concluded the interview without seeing him again.

To advocate or not?

SQL Server guru Brent Ozar had an encounter with a CTO who said “I thought I’d save money in the cloud by not having a DBA, but what I’m learning is that in the cloud, I actually get a return on my DBA investments.” Surely, for performance projects in the cloud, the euros being picked up are visible to everyone. But streamlining and compacting a database landscape for better agility is reserved for good technical leadership that aims for a mature company. The central question for a company is: how do you see your DBA?

Uwe explains trace flag 1117 with cups of water